AI Agents for Executive Search: From Software to Service

Designing agents that generate complete research reports instead of just helping users build them, cutting weeks of work down to a final review.

From supporting work to delivering outcomes

This project is a shift from software that helps users do the work to agents that deliver it for them.

Researchers typically spend weeks building detailed documents for clients: analyzing target companies, mapping the competitive landscape, and defining what the ideal candidate looks like for each role.

Instead of just helping researchers build these documents faster, we're designing agents that generate completed drafts. Researchers review, refine, and deliver. The role shifts from builder to editor.

Leading agent-first design

I lead design from early framing through ongoing iteration, defining agent workflows, information architecture, and design patterns that build user trust in agent outputs. I work closely with Product, Engineering, and partners from one of the world's leading global firms. The core questions we keep coming back to: how do users stay in control, what happens when the agent fails, and how does the system earn trust over time.

To accelerate validation, I build working coded prototypes using our design system with AI, making it possible to test how agent interactions actually feel at runtime.

Why we started

Executive search researchers run research for senior roles, like a CFO or board member, on behalf of their clients.

For every search, they map the market, build and score a candidate list, and produce deliverables that the client reviews. Every step requires judgment. They have to stand behind every output that reaches a client.

Our Executive Search Platform found strong adoption and made researchers faster. But faster wasn't enough. Researchers were still building every deliverable from scratch, every stage, every time. The effort hadn't changed, just the speed.

What adoption revealed

Productivity gains made users faster, but didn't reduce the work itself

It became clear which repeated deliverables agents could generate

Real impact comes from agents delivering completed documents, not just supporting the process

Moving from support to delivery

Adoption alone wasn't enough. Real impact meant changing how work is delivered, not just how efficiently users can do it themselves. That's where AI agents came in: generate the documents, let users review and refine. We designed for the full research lifecycle, end to end.

Goals

The goal was simple: go beyond helping users build the work, and start delivering it for them. Agents deliver outcomes users can confidently act on. Users start from a finished draft, not a blank page. They guide, review, and refine.

Approach & collaboration

Defining an agent-first model

This project represents a shift from SaaS workflows. It means defining how agents and users work together. The challenge isn't just designing screens. It's figuring out how much the agent does on its own, how users stay in control, and how the system earns trust over time.

SaaS: 100% user → Assistant: agents help → Agent: 10% user, 90% agent

I worked closely with Product, engineers, and cross-functional stakeholders, including our co-innovation partner, to align on how an agent-first system should behave. We worked through this in whiteboarding sessions and concept reviews, testing assumptions early before committing to a direction.

Key questions included:

How much autonomy should agents have?

How do users stay confident in work they didn't build themselves?

How do we balance visibility with simplicity when work happens over hours or days?

Core design principles

These principles kept us on track as we designed into the agent-first model.

Agents work, UI supports

Agents do the work. The UI provides context, review, and confirmation.

Outcome over workflow

Users engage in the final 10% of work, reviewing and approving rather than building from scratch.

The system remembers, so users don't have to

Context and history stay visible so users can pick up where they left off.

Chat is the history

Agents explain what happened and capture context and decisions made throughout the process.

These principles kept the focus on what mattered: agent experiences users could trust.

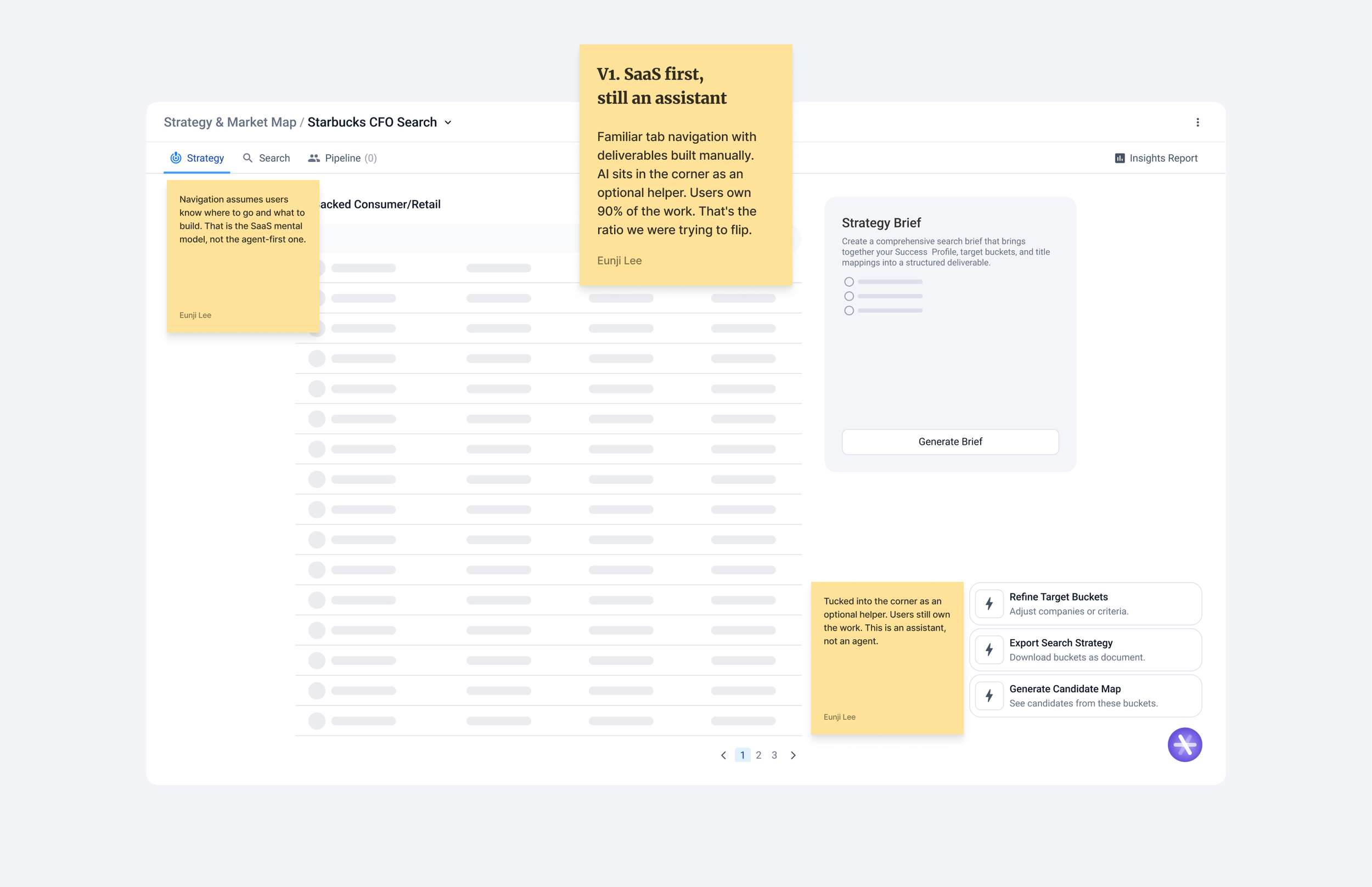

From SaaS to agent-first

Exploring what the interface should tell users when the agent is doing the work.

Exploration V1

V1 followed a familiar SaaS pattern. Users navigated between tabs, built deliverables manually, and used an AI assistant button in the corner when they needed help. But that was the model we were trying to move away from. We needed to flip the relationship. Agents deliver work, not just support it.

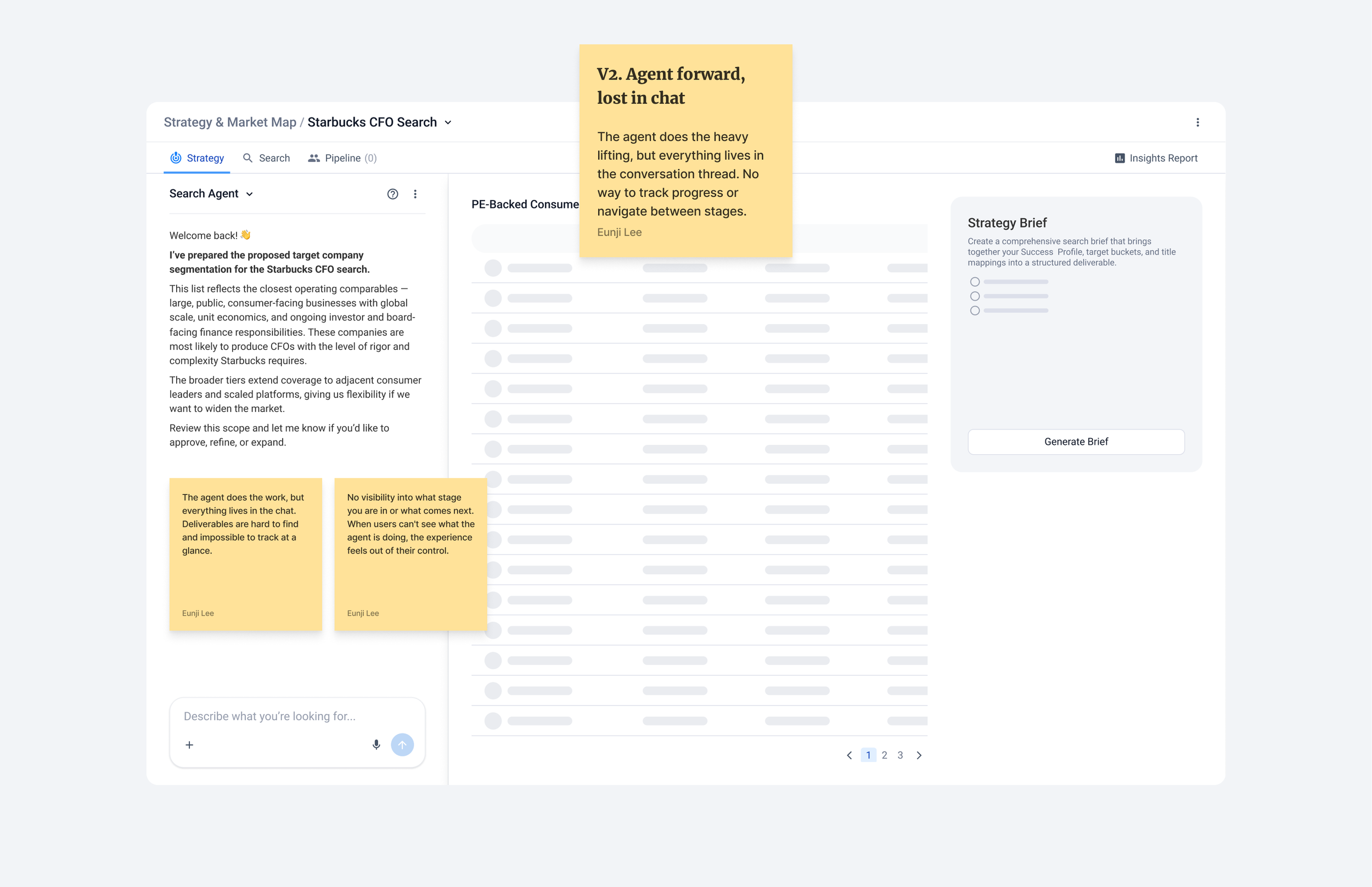

Exploration V2

V2 put the agent conversation front and center. The 90% agent, 10% user ratio started to feel achievable. But deliverables were buried inside the conversation thread. Users had no way to see where they were or what came next. When progress is invisible, the experience feels out of their control.

Decided Direction

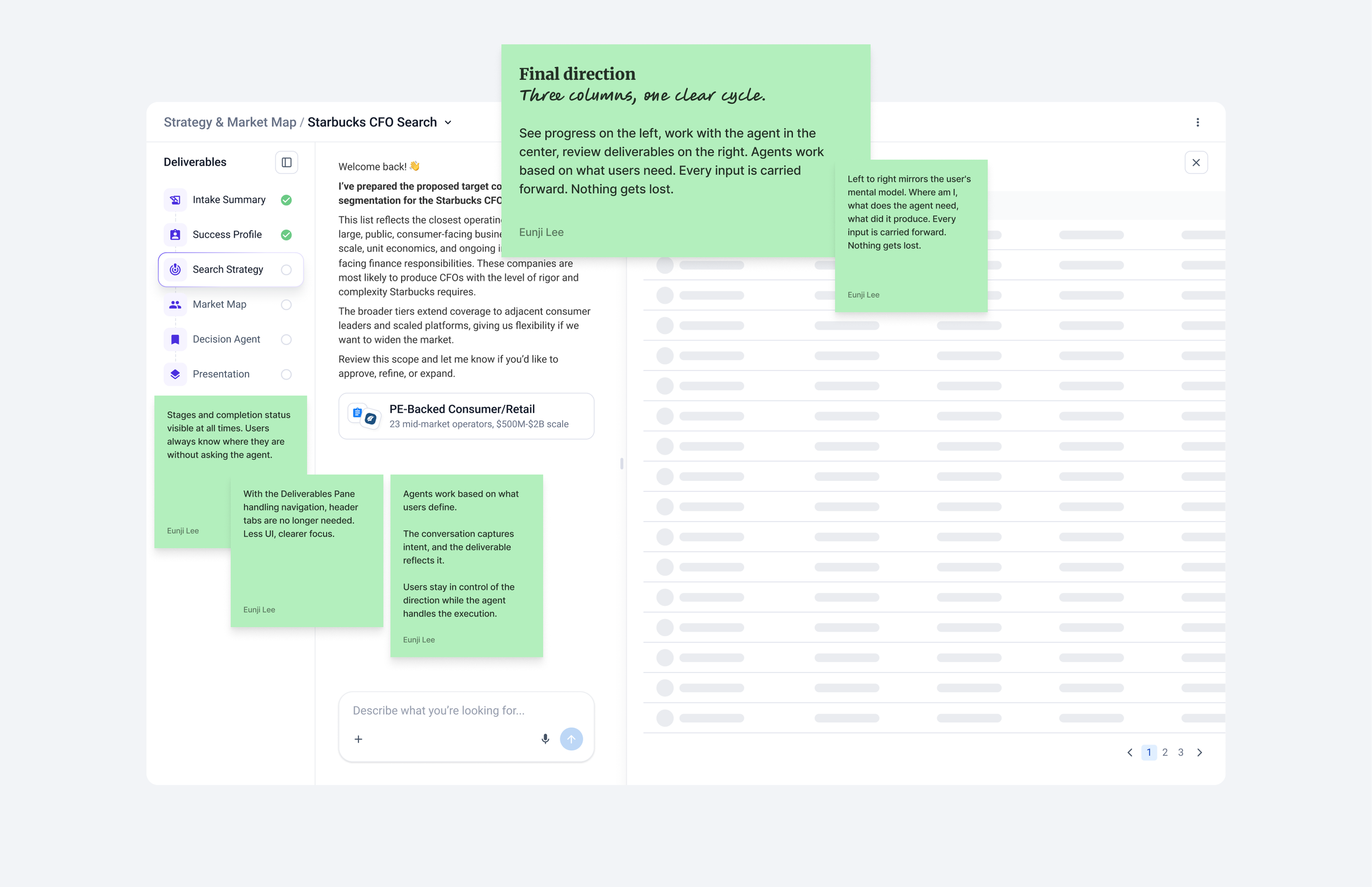

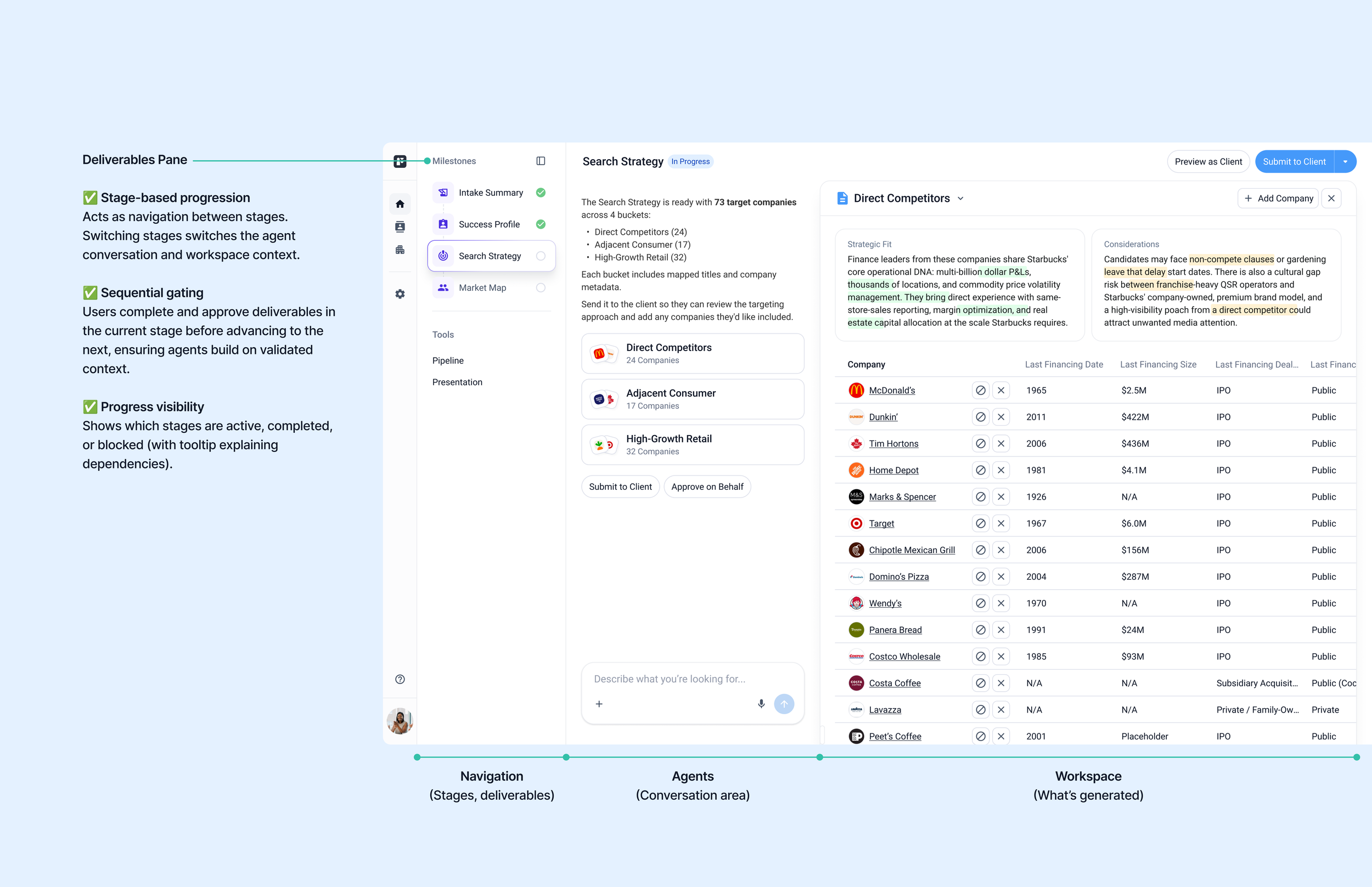

The current direction adds a deliverable milestones panel on the left. It shows stages and progress, and lets users navigate between stages.

With the panel handling navigation, we removed the header tabs entirely. Tabs implied the user was navigating features. Milestones implied they were moving through work. That distinction changed how the product felt.

The three-column layout creates a clear cycle: see where you are, work with the agent, review the deliverable. Every input is carried forward. Users stay in control of the direction while the agent handles the work.

Structure and navigation

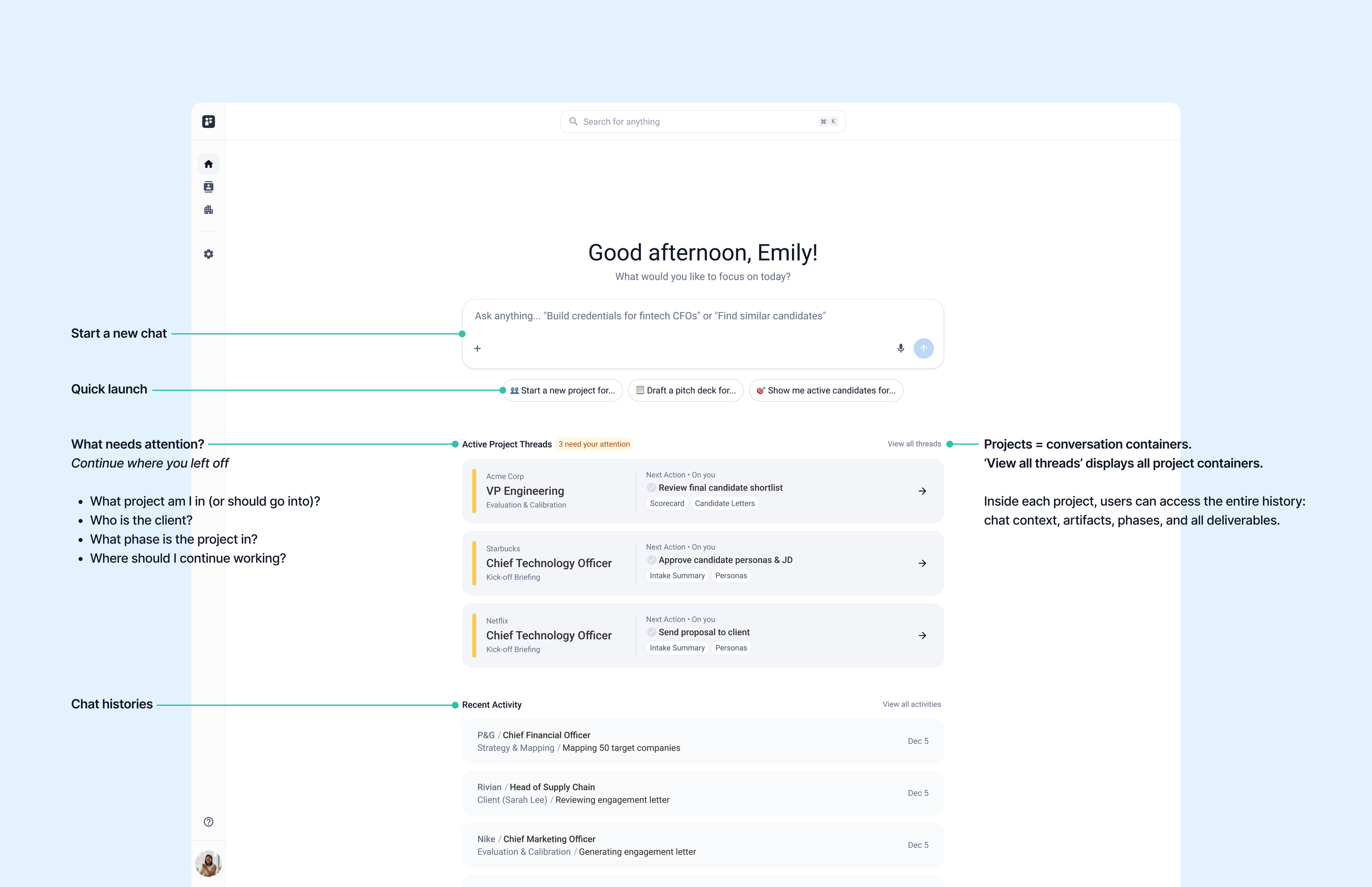

To support the shift from building to reviewing, I designed an information architecture organized around projects as work threads.

Each project guides users through a sequence of stages: Intake Summary → Success Profile → Search Strategy → Market Map → Pipeline → Presentation. Instead of navigating through tools, users move through conversations with agents.

At each stage, agents generate outputs based on context from previous stages and the ongoing conversation. Users review, refine, approve, and move to the next stage.

Sequential progression

Users move through stages in order, each building on the previous work. Stages act as both the workflow structure and navigation.

Context building through stages

Each approved stage feeds into the next. The agent gets smarter and builds on what users have confirmed, so the work stays enriched and aligned throughout.

Clear progress tracking

The system shows which stages are active, completed, or blocked, so users always know where they are.

This approach keeps agents doing the heavy lifting, while users always know when to step in, review, refine, and stay in control.
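The stage model described above can be sketched in a few lines of TypeScript. This is an illustrative sketch, not the production implementation: the stage names come from the sequence listed earlier, while `ProjectState`, `stageStatuses`, and `approve` are hypothetical names. It also assumes that "blocked" means a stage is waiting on an earlier one to be approved, which the case study does not spell out.

```typescript
// Stage names are taken from the case study; all types and functions
// below are hypothetical and exist only for illustration.
const STAGES = [
  "Intake Summary",
  "Success Profile",
  "Search Strategy",
  "Market Map",
  "Pipeline",
  "Presentation",
] as const;

type StageName = (typeof STAGES)[number];
type StageStatus = "completed" | "active" | "blocked";

interface ProjectState {
  // Stages the researcher has approved so far.
  approved: StageName[];
}

// Derive a status for every stage: approved stages are completed, the
// first unapproved stage is active, and every later stage is blocked
// (assumption: blocked = waiting on an earlier stage).
function stageStatuses(state: ProjectState): Record<StageName, StageStatus> {
  const result = {} as Record<StageName, StageStatus>;
  let activeAssigned = false;
  for (const stage of STAGES) {
    if (state.approved.indexOf(stage) !== -1) {
      result[stage] = "completed";
    } else if (!activeAssigned) {
      result[stage] = "active";
      activeAssigned = true;
    } else {
      result[stage] = "blocked";
    }
  }
  return result;
}

// Approval is only allowed on the active stage, which enforces the
// sequential progression. Returns a new state instead of mutating.
function approve(state: ProjectState, stage: StageName): ProjectState {
  if (stageStatuses(state)[stage] !== "active") {
    throw new Error(`Cannot approve "${stage}": it is not the active stage`);
  }
  return { approved: [...state.approved, stage] };
}
```

Deriving status from the list of approved stages, rather than storing it per stage, keeps the milestones panel and the workflow in sync by construction: there is only one source of truth for where the project stands.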

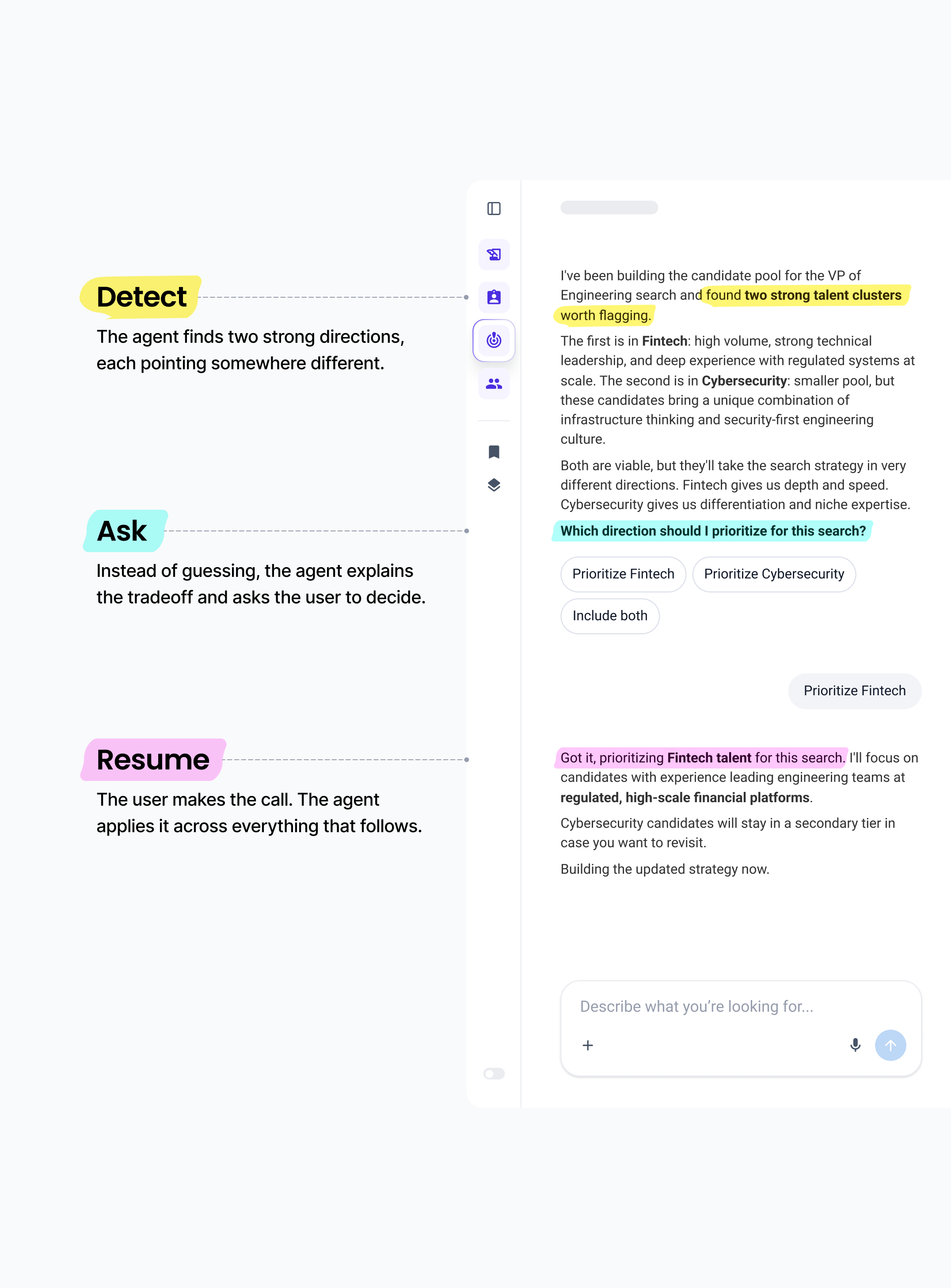

Designing for ambiguity: the clarification loop

The biggest risk in shifting users from builders to editors is silent failure. When an agent guesses wrong, trust breaks, and users fall back to doing the work manually. The mental migration stops.

The Clarification Loop is how we prevent that. Instead of guessing when it hits ambiguity, the agent pauses and asks.

The logic follows three steps:

Detect: The agent hits a point where it can't confidently move forward. For example, a brief mentions "strong communicator" but two very different profiles could match.

Ask: Instead of picking one, the agent surfaces the conflict and asks for direction.

Resume: The user makes a quick call. The agent applies that direction across the entire document, not just the single instance.

This is what builds trust over time.

Users stay in control without redoing the work. And every successful loop makes it a little easier to keep going.
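As a rough illustration, the Detect, Ask, Resume steps could be modeled like this. The sketch makes several assumptions: how the agent actually detects ambiguity is not described here, so detection is represented simply as an `Ambiguity` value handed to the function; `nextStep`, `applyDecision`, and the type names are hypothetical; and "applying the direction across the document" is simplified to annotating every section that mentions the ambiguous term.

```typescript
// Hypothetical types for illustration; not the product's real data model.
interface Ambiguity {
  term: string;              // phrase from the brief, e.g. "strong communicator"
  interpretations: string[]; // readings the agent cannot choose between
}

type AgentStep =
  | { kind: "proceed" }
  | { kind: "ask"; question: string; options: string[] };

// Detect + Ask: pause only when at least two readings are plausible.
// Otherwise the agent keeps working without interrupting the user.
function nextStep(ambiguity: Ambiguity | null): AgentStep {
  if (ambiguity === null || ambiguity.interpretations.length < 2) {
    return { kind: "proceed" };
  }
  return {
    kind: "ask",
    question: `"${ambiguity.term}" could mean different things. Which applies here?`,
    options: ambiguity.interpretations,
  };
}

// Resume: record the user's choice on every section that mentions the
// term, not only the place where the question was raised.
// Sections that do not mention the term are returned unchanged.
function applyDecision(sections: string[], term: string, choice: string): string[] {
  return sections.map((section) =>
    section.split(term).join(`${term} (${choice})`)
  );
}
```

The property worth noting is that `applyDecision` works on the whole list of sections. One answer from the user resolves every occurrence, which is what keeps the loop cheap enough that users are willing to answer it.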

Design evolution

I moved into designing each stage of the search journey. Every stage follows the same cycle: the agent generates a completed draft, the user reviews and refines, and the approved context carries forward to the next stage.

Converting designs to code

I build working coded prototypes using Claude, Codex, VS Code, and GitHub, integrating APIs and voice functionality to test how interactions actually feel at runtime. The prototypes are built on our design system, which keeps everything consistent and production-ready from the start.

Beyond testing, they become a shared reference for product, design, and engineering, so everyone knows exactly how it should work before production code is written.

Key interactions, built in code

Showing the work as it happens

While the agent generates a deliverable, it surfaces which sources it consulted in real time. This was a direct response to a trust gap. Researchers needed to see where the work came from to feel confident in the output and defend it to clients.

Reviewing and approving deliverables

The agent builds each deliverable, and the researcher reviews, refines, and either approves or submits it to the client. Approving moves the project to the next milestone.

Each approved deliverable feeds context into the next stage, so the agent gets smarter as the work progresses. Users stay in control of direction while the agent handles the work.

Voice mode for hands-free interaction

Researchers can switch to voice mode to describe changes, give feedback, or run bulk actions without touching the keyboard. Useful when reviewing deliverables, moving between meetings, or just thinking out loud. Voice keeps the workflow moving without pulling users back to a typing interface every time they have a thought.

Feedback that improves the agent

AI responses include thumbs feedback. A thumbs down opens a short prompt asking what was off. The response is logged, routed to an internal Jira board for the team to review, and fed back into model training. The agent gets better at the things researchers actually flag.
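One way to picture this feedback flow is as a small routing function. The sketch below is hypothetical: `FeedbackEvent`, `needsFollowUp`, `route`, and the destination labels are illustrative names, and no real Jira or training API is called. It also assumes that a thumbs up, or a thumbs down with no written answer, is only logged, which the description above does not specify.

```typescript
// Hypothetical shapes for illustration; destinations are plain labels,
// not calls into Jira or any training pipeline.
type Rating = "up" | "down";

interface FeedbackEvent {
  responseId: string;
  rating: Rating;
  comment?: string; // the answer to "what was off?", collected on thumbs down
}

type Destination = "log" | "review-board" | "training-queue";

// A thumbs down opens the short follow-up prompt; a thumbs up does not.
function needsFollowUp(rating: Rating): boolean {
  return rating === "down";
}

// Every event is logged. Only a thumbs down with a written answer is
// routed on for team review and training (assumption: without an answer
// there is nothing for the team to act on).
function route(event: FeedbackEvent): Destination[] {
  const destinations: Destination[] = ["log"];
  const hasComment =
    event.comment !== undefined && event.comment.trim() !== "";
  if (event.rating === "down" && hasComment) {
    destinations.push("review-board", "training-queue");
  }
  return destinations;
}
```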

Submitting to the client

Researchers submit exactly what they see directly to the client. The client lands on a clean, focused view with only the deliverable. No conversation history, no internal UI. Clients can add comments directly on the deliverable. The researcher reviews the feedback and works with the agent to act on it. Everyone stays in the loop without leaving the platform.

The client surface has no chat, by design. Giving clients the same interface as researchers would have blurred the roles. The researcher directs the agent. The client reviews what the researcher decides to share. Keeping those surfaces separate protected that distinction.
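The separation between the two surfaces can be expressed at the type level. The following is a minimal sketch under assumed names (`ResearcherWorkspace`, `ClientView`, `toClientView`, `addClientComment` are all hypothetical): the client view is built by copying only the deliverable and its comments, so conversation history cannot leak into it by accident.

```typescript
// Hypothetical data shapes for illustration only.
interface Deliverable {
  title: string;
  body: string;
}

interface ResearcherWorkspace {
  deliverable: Deliverable;
  conversation: string[];   // agent chat history, researcher-only
  clientComments: string[]; // feedback left by the client
}

// The client type has no conversation field at all, so the client
// surface cannot render chat even by mistake.
interface ClientView {
  deliverable: Deliverable;
  comments: string[];
}

// Build the client surface from the workspace by copying only what the
// researcher has chosen to share.
function toClientView(workspace: ResearcherWorkspace): ClientView {
  return {
    deliverable: { ...workspace.deliverable },
    comments: [...workspace.clientComments],
  };
}

// Client comments flow back into the researcher's workspace, where the
// researcher reviews them and works with the agent to act on them.
function addClientComment(
  workspace: ResearcherWorkspace,
  comment: string
): ResearcherWorkspace {
  return {
    ...workspace,
    clientComments: [...workspace.clientComments, comment],
  };
}
```

Building the client view as an explicit projection, instead of hiding chat with a UI flag, means the role distinction is enforced by the data the client receives rather than by what the interface chooses to show.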

Submitting to the client:

Client reviews and leaves feedback:

Where we are now

Designs across all stages have been implemented and launched with a subset of researchers across our partner firms. We're actively expanding.

Early usage showed researchers moving through full milestone sequences and using agent-generated drafts as real starting points, not as output they discard and rewrite. That was the core signal we were watching for: not whether researchers could use it, but whether they trusted it enough to.

“I am incredibly impressed by the agentic work they are doing. I can see it as an opportunity for my fellow Researchers and I to be forward-deployed, shepherding the AI and the platform as it helps execute research and setting strategy.

This looks to be huge in unlocking potential for the Research team to assist in additional areas. Excited to see where this goes!”

– Senior Researcher, global executive search firm

What's next

We're expanding to more users across our partner firms. As more context builds up, the agent gets smarter. We're actively monitoring where it gets things wrong, improving our eval criteria, and training it to do better over time. The real measure is whether people trust it enough to let it do the work.